AI White Paper: Ethics Is All We Need

By Sudhir Tiku (Fellow, AAIH & Editor, AAIH Insights)

Roughly 3.7 billion years ago, something interesting happened: life possibly began. It began as single-celled microbes in an environment short of oxygen but rich in methane. By around 2.4 billion years ago, cyanobacteria had emerged, making food using water and the energy of the sun. This led to the Great Oxidation Event, setting the stage for complex, specialized, multicellular life. From here the march of life was unstoppable, and around two hundred thousand years ago Homo sapiens, our distinguished alumnus, arrived. Interestingly, Homo sapiens is a Latin term that means “wise human”.

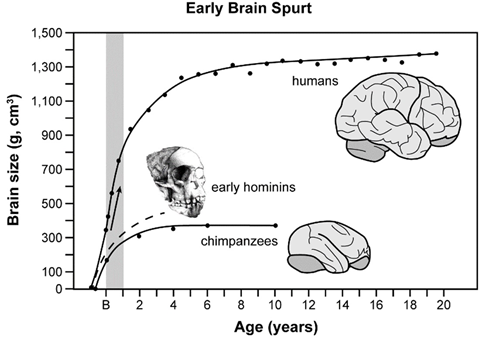

But who made us wise, or intelligent? From an evolutionary perspective, the human brain did, differentiating us from other species by acquiring outstanding cognitive capabilities such as abstract thinking, creativity, and language. This happened because the brain is a hungry organ. It consumes about 20% of our calorie intake and uses this energy to offset evolutionary pressures: optimizing its size, tweaking not 98.8% but only about 1.2% of our DNA (the share commonly cited as separating us from chimpanzees) to stay efficient, and, most importantly, staying plastic by firing, forging, and connecting the roughly hundred billion neurons it has. This makes our brain the most efficient experiential learning machine on planet Earth. It is this same human brain, which acquires and applies knowledge and skills, that has created its alter ego: artificial intelligence. This is a scenario in which machines and computers can first work, then learn, then reason, and finally think like us. In technical terms there are, hence, four types of artificial intelligence: reactive machines, limited-memory machines, theory-of-mind machines, and, lastly, self-aware machines.

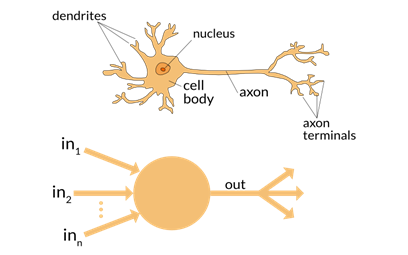

Let us look at some fundamentals of why this can happen. Humans take in available data and information and use logic, memory, and experience to solve problems and make decisions. This is a classical input-to-output mapping, and at the center of this activity is the biological neuron, or nerve cell. In artificial intelligence, the neural node is the mathematical model of a biological neuron. A node takes its inputs, multiplies each by a learned weight coefficient, sums the results, and generates an output value. When many nodes are arranged in layers, the resulting network is what we call a neural network: it mimics the behavior of the human brain, allowing a computer program to recognize patterns and solve problems. Hence deep learning, a more advanced form of machine learning, uses many-layered neural networks to imitate biological intelligence.
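To make the idea of a neural node concrete, here is a minimal sketch in Python. It assumes a sigmoid activation and hand-picked weights; both are illustrative choices, not something this paper prescribes, and the function names `node_output` and `layer_output` are hypothetical.

```python
import math


def sigmoid(z: float) -> float:
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def node_output(inputs, weights, bias=0.0):
    """One artificial node: weighted sum of inputs plus bias, then activation."""
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return sigmoid(z)


def layer_output(inputs, weight_rows, biases):
    """A layer is several nodes that all read the same inputs."""
    return [node_output(inputs, w, b) for w, b in zip(weight_rows, biases)]


if __name__ == "__main__":
    # Illustrative values only; in a real network the weights are learned.
    x = [0.5, -1.0]
    hidden = layer_output(x, [[0.8, 0.2], [-0.4, 0.9]], [0.0, 0.1])
    y = node_output(hidden, [1.5, -2.0])
    print("hidden layer:", hidden)
    print("network output:", y)
```

Stacking `layer_output` calls, each feeding the next, is what turns individual nodes into a network with hidden layers; training is the process of adjusting the weights and biases, which this sketch leaves out.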

Let us look at some physics. A nerve impulse in a biological neuron travels at up to about 100 m/s, or 0.06 miles per second, while the electrical signals in the circuits that run artificial neural networks propagate at between 50% and 99% of the speed of light. The speed of light is about 3 × 10⁸ m/s, or 186,282 miles per second. That is lightning speed. Biological neurons largely cannot renew themselves, so once neurons in the brain die, there is little or no replenishment. Neural nodes, however, are not chained to mortality or immortality, as they can be artificially engineered. The range of topics that neural networks can handle is almost unlimited: hidden layers apply learned weights and biases to the inputs, learning along the way and creating an output.
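The unit conversions and the size of the speed gap can be checked with a few lines of arithmetic. This is a rough sketch: the 100 m/s nerve speed and the 50% to 99% range are the figures quoted above, and the helper names are hypothetical.

```python
METERS_PER_MILE = 1609.344          # international mile, exact
SPEED_OF_LIGHT_M_S = 299_792_458    # exact by definition of the metre
NERVE_IMPULSE_M_S = 100.0           # upper-end figure quoted in the text


def to_miles_per_second(meters_per_second: float) -> float:
    """Convert a speed from metres per second to miles per second."""
    return meters_per_second / METERS_PER_MILE


def speed_ratio(fraction_of_light_speed: float) -> float:
    """How many times faster a signal at this fraction of c is than a nerve impulse."""
    return fraction_of_light_speed * SPEED_OF_LIGHT_M_S / NERVE_IMPULSE_M_S


if __name__ == "__main__":
    print(f"nerve impulse: {to_miles_per_second(NERVE_IMPULSE_M_S):.3f} miles/s")
    print(f"speed of light: {to_miles_per_second(SPEED_OF_LIGHT_M_S):,.0f} miles/s")
    for fraction in (0.50, 0.99):
        print(f"{fraction:.0%} of c is about {speed_ratio(fraction):,.0f}x a nerve impulse")
```

Even at the low end of the range, the electrical signal is on the order of a million times faster than the nerve impulse. Raw signal speed is only one factor in how quickly either system actually computes.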

Now, with this background, let us take a telescopic view of 2040 AD. Artificial intelligence is a reality, and its impact on societies and communities is heavy. We have manufacturing robots, autonomous self-driving cars, offices with digital lakes, and predictive and genetic health care management in which organs can be grown, ageing is conquered, and one can possibly decide when to die. We have automated financial planning with digital tokens, travel experiences based on augmented reality, and beautiful-looking humanoid robots with facial expressions. A new symbiotic social contract will exist between humans and humanoid robots, as they will work in our homes, our offices, and our industries. Super-intelligent bots will be part of every connected device, connected building, and connected infrastructure. Social media will be a medium of fragmented identities in which physical, virtual, and digital realities merge, triggering disruption and new business models. With 10G or 12G networks, streaming will be three-dimensional on ultra-thin plasma walls, and content creation will be surreal. Paper and paper currency will have disappeared.

There will be massive disruption in the food industry, driven by AI and helped by the themes of responsible consumption, sustainability, and climate change. So on that special Friday evening, the food served on your invisible fiber plate by your personal humanoid will be healthy cell-based food, yet as sumptuous and tasty as the food you eat now. Babies of choice could be ordered from the stem labs in the city, and the social relationship between man and woman could be redefined.

Artificial intelligence will touch every facet of life in 2040 AD. With decision making based on metadata, big data, and predictive algorithms, policing, policy, and performance management will be more structured and, hopefully, fairer and more inclusive. AI will decide who gets the next job, and AI will predict the next typhoon well in advance, saving life and property. This is the good side of AI, and it is in line with the four principles of bioethics: beneficence, non-maleficence, autonomy, and justice.

But there is a tough side to AI as well, and we have a moral responsibility to address it. There is a chance that AI will be coded with inherent human bias and prejudice built in. If this happens on a large scale, such AI will destroy human collaboration, block human control and judgement, erode human intervention and integration, debase human values, and may make all of us lazy and boring. AI can also destroy our social connections by making us isolated and overly self-reliant, leading to the decay of the already compromised values of ethics, equity, and empathy. With AI unleashing hordes of need-based robots and compromised identities, communities can become pawns in the hands of some AI algorithm. AI can go out of control, as some experts already warn.

What can we do to prevent this from happening, and is prevention even possible?

For a life that hinges on AI, we can consider a two-dimensional model. The first dimension of the model is to set the rules of coding now and make them ethical and inclusive. Algorithmic bias has to be addressed, and minorities, socially deprived classes, and disadvantaged strata have to be given a fair chance when AI makes consequential decisions based on the training data it sees, with no room for political or non-political pressure. We will have to create independent expert centers, institutions, and quality gates to ensure that technological innovation in AI helps promote our security, dignity, legality, privacy, solidarity, and prosperity, and leaves the final call on implementation to us. To do this, we have to hardwire ethics into our coding culture, education, and behavior now. The ethical framework can be:

- The behavior of an AI system should be in line with a predefined ethical design code.

- A regulatory framework should evaluate the ethical implications of AI systems as they replace traditional societal structures.

- Codes of conduct, certifications, and standards for ethical coding should be professionally enshrined so that the integrity of developed algorithms is not compromised.
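One way a quality gate might make the algorithmic-bias concern measurable is to compare how often an AI system selects people from different groups. The sketch below computes a simple selection-rate gap (often called a demographic parity difference). The group labels and decisions are made-up illustrative data, the function names are hypothetical, and this is only one of several fairness measures, not a method this paper prescribes.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Return the share of positive decisions per group.

    `decisions` is an iterable of (group, selected) pairs, where
    `selected` is True if the system chose that person.
    """
    totals = defaultdict(int)
    chosen = defaultdict(int)
    for group, selected in decisions:
        totals[group] += 1
        if selected:
            chosen[group] += 1
    return {group: chosen[group] / totals[group] for group in totals}


def parity_gap(decisions):
    """Difference between the highest and lowest group selection rates."""
    rates = selection_rates(decisions)
    return max(rates.values()) - min(rates.values())


if __name__ == "__main__":
    # Made-up hiring decisions for two hypothetical groups.
    sample = [
        ("group_a", True), ("group_a", True), ("group_a", True), ("group_a", False),
        ("group_b", True), ("group_b", False), ("group_b", False), ("group_b", False),
    ]
    print("selection rates:", selection_rates(sample))
    print("parity gap:", parity_gap(sample))
```

A gap near zero means the groups are selected at similar rates. What size of gap is acceptable, and whether this is even the right measure for a given decision, is exactly the kind of question the independent institutions described above would have to settle.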

The second dimension of the model could be to set AI free and let it evolve, as markets do, in the belief that self-regulation and self-correction will kick in. However, since the risks are extremely high, this is not recommended.

There is no clear answer here, but we, as an intelligent and responsible human community, must decide and solve the riddle of AI now, not for our sake but for the sake of the next generations.