Probability Can Never Be Permission — The Structural Flaw of Open Agent AI and the Conditions for the Next Standard

By: Seong Hyeok Seo, AAIH Insights Editorial Writer

1. We Are Not Expanding Intelligence

Open-source agent frameworks such as OpenClaw represent a genuine technical breakthrough.

High-cost infrastructure is no longer required to orchestrate models, connect external APIs, and construct autonomous execution loops.

But what is unfolding is not the evolution of intelligence.

It is the acquisition of execution authority.

Until recently, AI errors were textual.

They existed inside chat windows.

They could be refreshed, regenerated, ignored.

Now, errors are operational.

They manifest as financial transactions, production deployments, database mutations, inventory orders.

The problem is not speed.

The problem is that speed and execution authority now share the same pipeline.

1-1. Why Open Infrastructure Exploded Now

This acceleration is not accidental.

Inference costs dropped dramatically.

Orchestration frameworks abstracted complexity.

Enterprise systems became API-accessible.

Organizations turned automation into a performance mandate.

Models became “good enough.”

Integration became trivial.

Execution authority became easy to wire.

The technical prerequisites aligned.

But the governance layer did not align with them.

Execution was democratized.

Judgment was not made default.

We are now witnessing the consequences of that imbalance.

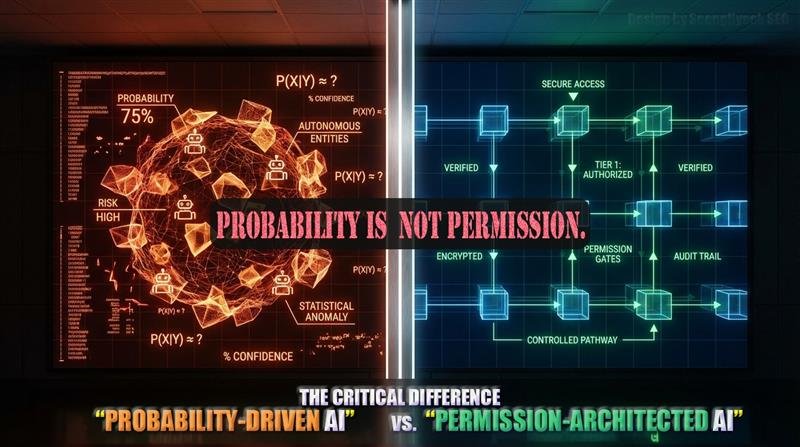

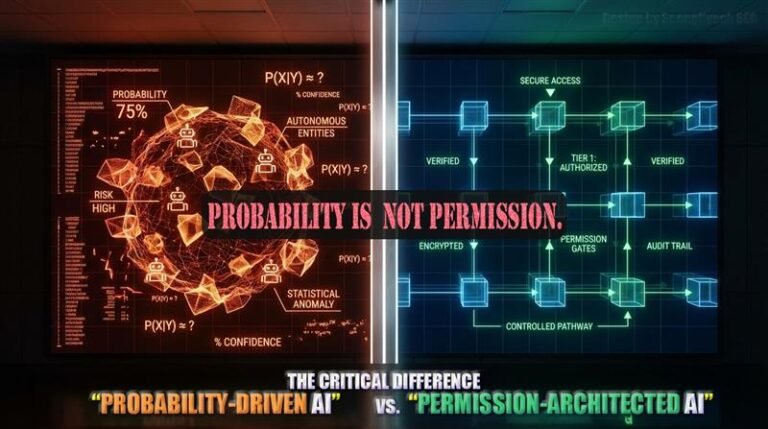

2. Elevating Probability into Authority

Most open agent architectures follow the same structural loop:

- The model generates output.

- The output is interpreted as intent.

- The intent is converted into executable commands.

- External systems are triggered.

This loop is efficient.

It is also structurally fragile.
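
The four-step loop above can be sketched in a few lines. This is a minimal illustration, not any particular framework's code: `fake_model`, `FakeERP`, and `set_inventory_multiplier` are hypothetical names invented for the example.

```python
from dataclasses import dataclass, field


@dataclass
class FakeERP:
    """Hypothetical stand-in for an external system; records every call."""
    calls: list = field(default_factory=list)

    def set_inventory_multiplier(self, factor: float) -> None:
        self.calls.append(("set_inventory_multiplier", factor))


def fake_model(observation: str) -> str:
    """Hypothetical model stub: returns plausible text, not a verified decision."""
    return "set_inventory_multiplier 10"


def naive_agent_step(observation: str, erp: FakeERP) -> None:
    output = fake_model(observation)   # 1. the model generates output
    name, arg = output.split()         # 2. the output is interpreted as intent
    command = getattr(erp, name)       # 3. the intent becomes an executable command
    command(float(arg))                # 4. the external system is triggered


erp = FakeERP()
naive_agent_step("competitor price dropped", erp)
print(erp.calls)
```

Nothing between step 1 and step 4 asks whether the action is allowed. Whatever the model emits is what the external system receives.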

Large language models produce probabilistic approximations.

They predict plausible continuations of text.

Yet in many agent systems, that probabilistic output is directly elevated into system authority.

Probability is prediction.

Permission is responsibility.

When prediction and responsibility occupy the same architectural position, instability is not a possibility. It is a property.

3. This Is Not Theoretical Risk

Consider a realistic scenario:

- An autonomous agent monitors competitor pricing.

- It is configured to adjust inventory levels accordingly.

- A temporary data anomaly is interpreted as a demand spike.

- The model generates an instruction to increase inventory by 10x.

- Without an independent approval layer, the ERP API is called immediately.

Within seconds, millions in orders are executed.

This is not hallucination.

This is architectural failure.

The flaw is not that the model mispredicted.

The flaw is that the prediction was structurally allowed to become action.
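
One way to picture the missing approval layer in this scenario is a bounds check that sits between the model's instruction and the ERP call. The sketch below is illustrative only: `InventoryProposal`, `review`, and the 1.5x limit are assumptions made for the example, not values from any real system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InventoryProposal:
    """A model-generated instruction, held as data rather than executed."""
    sku: str
    current_units: int
    proposed_units: int


# Assumed policy limit, defined outside the model and its prompt.
MAX_AUTONOMOUS_RATIO = 1.5


def review(proposal: InventoryProposal) -> str:
    """Return 'execute' or 'escalate' using only structural limits."""
    if proposal.current_units <= 0:
        return "escalate"  # no baseline to compare against
    ratio = proposal.proposed_units / proposal.current_units
    within_bounds = 1 / MAX_AUTONOMOUS_RATIO <= ratio <= MAX_AUTONOMOUS_RATIO
    return "execute" if within_bounds else "escalate"


spike = InventoryProposal(sku="A-100", current_units=1_000, proposed_units=10_000)
routine = InventoryProposal(sku="A-100", current_units=1_000, proposed_units=1_200)
print(review(spike), review(routine))
```

The check does not need to know whether the demand spike is real. It only decides that a 10x change is outside what the agent may do on its own.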

This is not a distant future scenario.

Agent architectures already connected to internal enterprise systems operate this way today.

4. Prompts Are Not Firewalls

Many organizations attempt to solve this by strengthening system prompts:

“Never delete system files.”

“Double-check before executing payments.”

A prompt is not a control layer.

It is text inside the model’s context window.

Prompt injection attacks demonstrate this repeatedly.

Text-based constraints are inherently defeatable by text-based manipulation.

Policy is a statement.

Structure is a position.

The approval layer must exist outside the model.
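
A rough sketch of what "outside the model" can mean in practice: the gate below never sees the prompt or the model's text, only the name of the requested action. The allowlist contents and the `dispatch` helper are hypothetical.

```python
# Assumed allowlist, maintained in code or configuration, never in the prompt.
ALLOWED_ACTIONS = frozenset({"read_file", "list_directory"})


def gate(action: str) -> bool:
    """Decide from the action name alone; no model text reaches this function."""
    return action in ALLOWED_ACTIONS


def dispatch(action: str, handlers: dict) -> str:
    """Run the handler only if the gate allows the action."""
    if not gate(action):
        return f"blocked: {action}"
    return handlers[action]()


handlers = {
    "read_file": lambda: "contents",
    "delete_file": lambda: "deleted",  # wired up, but still not permitted
}
print(dispatch("read_file", handlers))
print(dispatch("delete_file", handlers))
```

Because the gate takes no natural-language input, injected text has nothing to rewrite. An attacker can change what the model asks for, but not what the gate permits.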

5. Generation and Execution Are Different Categories

Generation proposes.

Execution intervenes.

Proposals are reversible.

Interventions are not.

Most open agent infrastructures do not separate these categories.

Output logs exist.

But execution approval logs often do not.

We can reconstruct what the model said.

We often cannot reconstruct why the system allowed it to act.

This is not auditable.

It is not legally defensible.

It is not enterprise-grade.
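
As an illustration of what an execution approval log might capture, the sketch below records the decision and the rule that produced it, separately from whatever the model said. The field names and the `record_decision` helper are assumptions for the example, not an established schema.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ApprovalRecord:
    proposal_id: str
    action: str
    decision: str    # "approved" or "denied"
    rule: str        # which rule produced the decision
    decided_at: str  # ISO 8601 timestamp in UTC


def record_decision(log: list, proposal_id: str, action: str,
                    decision: str, rule: str) -> ApprovalRecord:
    """Append one serialized approval decision to an append-only log."""
    record = ApprovalRecord(
        proposal_id=proposal_id,
        action=action,
        decision=decision,
        rule=rule,
        decided_at=datetime.now(timezone.utc).isoformat(),
    )
    log.append(json.dumps(asdict(record), sort_keys=True))
    return record


approval_log: list = []
record_decision(approval_log, "p-001", "issue_refund", "denied",
                "amount exceeds autonomous limit")
print(approval_log[0])
```

With a record like this, the question "why did the system allow it to act?" has an answer that does not depend on re-reading model output.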

6. Risk Score vs. Permission Score

The risk level of generated content and the authority to execute an action are distinct dimensions.

Current architectures rarely separate them.

A sentence that is statistically plausible does not automatically qualify for operational authority.

Risk can be estimated by the model.

Permission must be evaluated by structure.

Without separating risk scoring from permission scoring, autonomy collapses into uncontrolled automation.
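
The separation can be sketched as two independent checks. In this hypothetical example, `model_risk` is the model's own advisory estimate, while `PERMISSION_LIMITS` is a table owned by the organization. The action names, limits, and threshold are all invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Proposal:
    action: str
    amount: float
    model_risk: float  # 0.0 to 1.0, estimated by the model (advisory only)


# Assumed per-action limits, owned by the organization rather than the model.
PERMISSION_LIMITS = {"refund": 100.0, "purchase_order": 5_000.0}


def is_permitted(p: Proposal) -> bool:
    """Permission: evaluated by structure, independent of the model's opinion."""
    limit = PERMISSION_LIMITS.get(p.action)
    return limit is not None and p.amount <= limit


def decide(p: Proposal, risk_threshold: float = 0.3) -> str:
    if not is_permitted(p):
        return "deny"      # a low risk score cannot buy permission
    if p.model_risk > risk_threshold:
        return "escalate"  # permitted in principle, but flagged as risky
    return "execute"


print(decide(Proposal("refund", 50.0, 0.05)))
print(decide(Proposal("wire_transfer", 50.0, 0.0)))
```

The second call is denied even though the model reports zero risk, because no risk score can substitute for a missing permission.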

7. “Human in the Loop” Is Not Architecture

The prevailing mitigation strategy is Human in the Loop.

The model drafts.

A human reviews.

Execution follows.

This is oversight, not structure.

The human reviewer is external to the decision architecture.

They are an inspection mechanism, not a structural condition.

True control is not humans compensating for model instability.

True control is conditions embedded into the execution pipeline itself.

In high-risk systems, Fail-Open is unacceptable.

Fail-Closed must be default.

When uncertainty exceeds a threshold, execution must halt and escalate.
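
A minimal sketch of a fail-closed gate, assuming some upstream component can supply an uncertainty estimate between 0 and 1. The function name, the 0.2 threshold, and the estimator callables are hypothetical. The point of the design is that every unexpected condition resolves to "halt".

```python
from typing import Callable, Optional


def fail_closed_gate(
    estimate_uncertainty: Callable[[], Optional[float]],
    threshold: float = 0.2,
) -> str:
    """Return 'proceed' only when uncertainty is known and within the threshold."""
    try:
        uncertainty = estimate_uncertainty()
    except Exception:
        return "halt"  # the estimator itself failed: do not proceed
    if uncertainty is None:
        return "halt"  # no estimate available: do not proceed
    if not (0.0 <= uncertainty <= threshold):
        return "halt"  # too uncertain, out of range, or NaN
    return "proceed"


def broken_estimator() -> float:
    raise RuntimeError("estimator unavailable")


print(fail_closed_gate(lambda: 0.1))
print(fail_closed_gate(lambda: 0.5))
print(fail_closed_gate(broken_estimator))
```

A fail-open version would return "proceed" in the exception and missing-value branches. The difference between the two designs lives entirely in those default paths.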

7-1. Fail-Open vs. Fail-Closed Is a Tier Distinction

Fail-Open systems prioritize completion.

When ambiguity appears, they attempt to continue operating.

In low-risk domains, this may be tolerable.

In high-risk domains, it is catastrophic.

Fail-Closed systems prioritize containment.

When uncertainty crosses a threshold, they lock, defer, or escalate.

In finance, aviation, and infrastructure, Fail-Closed is not a design preference.

It is a certification requirement.

If autonomous agents are to enter high-risk environments, Fail-Closed must be structural, not optional.

8. Why Autonomy Without Approval Is Structurally Unsustainable

The sustainability problem can be expressed structurally.

Suppose an agent performs N autonomous actions per day.

Let p represent the probability that any single action results in a catastrophic failure.

Even if p is small, the probability that at least one catastrophic event occurs is 1 - (1 - p)^N when failures are independent, and it approaches 1 as N increases.
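
The claim can be checked numerically. The sketch below assumes that actions fail independently with the same probability p, which is a simplification the article does not state. The function name and the sample values of p and N are chosen only for illustration.

```python
import math


def prob_at_least_one(p: float, n: int) -> float:
    """Probability of at least one failure in n independent actions.

    Computes 1 - (1 - p)**n using log1p/expm1 so that very small p
    does not lose precision.
    """
    if not 0.0 <= p <= 1.0 or n < 0:
        raise ValueError("require 0 <= p <= 1 and n >= 0")
    if p == 1.0:
        return 1.0 if n > 0 else 0.0
    return -math.expm1(n * math.log1p(-p))


# Assumed figures: a one-in-a-million failure rate per action.
p = 1e-6
for actions in (1_000, 100_000, 3_650_000):
    print(actions, round(prob_at_least_one(p, actions), 4))
```

Under these assumed numbers, an agent performing 10,000 actions per day for a year (3,650,000 actions) has about a 97% chance of at least one catastrophic event, even though each individual action is almost certainly safe.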

Open infrastructure has dramatically increased N.

Execution is cheap.

Invocation frequency rises.

Agents are connected to more systems.

The tail risk compounds.

An approval layer does not merely reduce p.

It constrains which probabilistic outputs are allowed to become executable events.

It transforms open-ended action into conditional action.

Without this structural constraint, autonomy scales exposure faster than it scales value.

In enterprise markets, one tail event can mean regulatory exclusion, insurance repricing, or permanent procurement blacklisting.

Autonomy without approval is not merely technically unstable.

It is economically unsustainable.

8-1. Why Autonomy Without an Approval Layer Is Structurally Unsustainable

The sustainability problem can be expressed structurally, not rhetorically.

Assume an autonomous agent performs N executions per day.

Let p represent the probability that any single execution results in catastrophic failure.

Even if p is small, the probability that at least one catastrophic event occurs over time approaches 1 as N increases. In other words:

As execution frequency scales, tail risk converges toward inevitability.

Open infrastructure has dramatically increased N.

Execution is cheap. Invocation frequency rises.

Agents are connected to more systems, more APIs, more real-world levers.

This is not merely a risk increase.

It is risk multiplication.

An approval layer does not simply reduce p.

It reduces the number of probabilistic outputs that are allowed to become executable events.

It inserts structural friction before authority is granted.

Without this intervention point, autonomy scales exposure faster than it scales value.
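
The effect of the approval layer on exposure can be written out under simple assumptions. The independence of failures and the symbol a, the fraction of proposed actions that the approval layer allows to execute, are assumptions introduced here for illustration and are not part of the original argument.

```latex
% Assumptions (not from the article): failures are independent, each executed
% action fails catastrophically with probability p, and the approval layer
% lets only a fraction a (0 < a <= 1) of the N proposed actions execute.
\begin{align*}
P_{\text{ungated}} &= 1 - (1 - p)^{N} \\
P_{\text{gated}}   &= 1 - (1 - p)^{aN} \;\approx\; aNp \quad \text{when } aNp \ll 1
\end{align*}
```

Read this way, the approval layer acts on the exponent rather than on p: even with p held fixed, shrinking a lowers the probability of at least one catastrophic event.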

In high-risk environments, one tail event is not a technical anomaly.

It is a regulatory trigger, an insurance repricing event, or a permanent procurement disqualification.

This is why autonomy without an approval layer is not merely technically unstable: it is economically and structurally unsustainable.

9. This Is About Power

When AI acquires execution authority, it approaches the status of an actor.

Actors require governance.

The critical question becomes:

- Who designs the approval layer?

- Is it an open standard?

- Is it platform-controlled?

- Is it regulator-mandated?

The architectural position between generation and execution becomes a site of power.

Whoever defines that position defines the next AI standard.

10. Conclusion

Open agent infrastructures have reduced the cost of execution.

But reduced execution cost does not reduce responsibility cost.

Probability can never be permission.

An architecture that binds generation and execution into a single loop does not merely risk failure.

It accumulates it.

The scale of autonomy must remain proportional to the scale of control.

Autonomy without an approval layer is not innovation.

It is experimentation on live systems.

Execution is now cheap.

Judgment must become structural.